Deployment

❗Important: The library/binary generated by eIQ Time Series Studio is licensed exclusively for use on NXP devices. It must be implemented and deployed solely on NXP products.

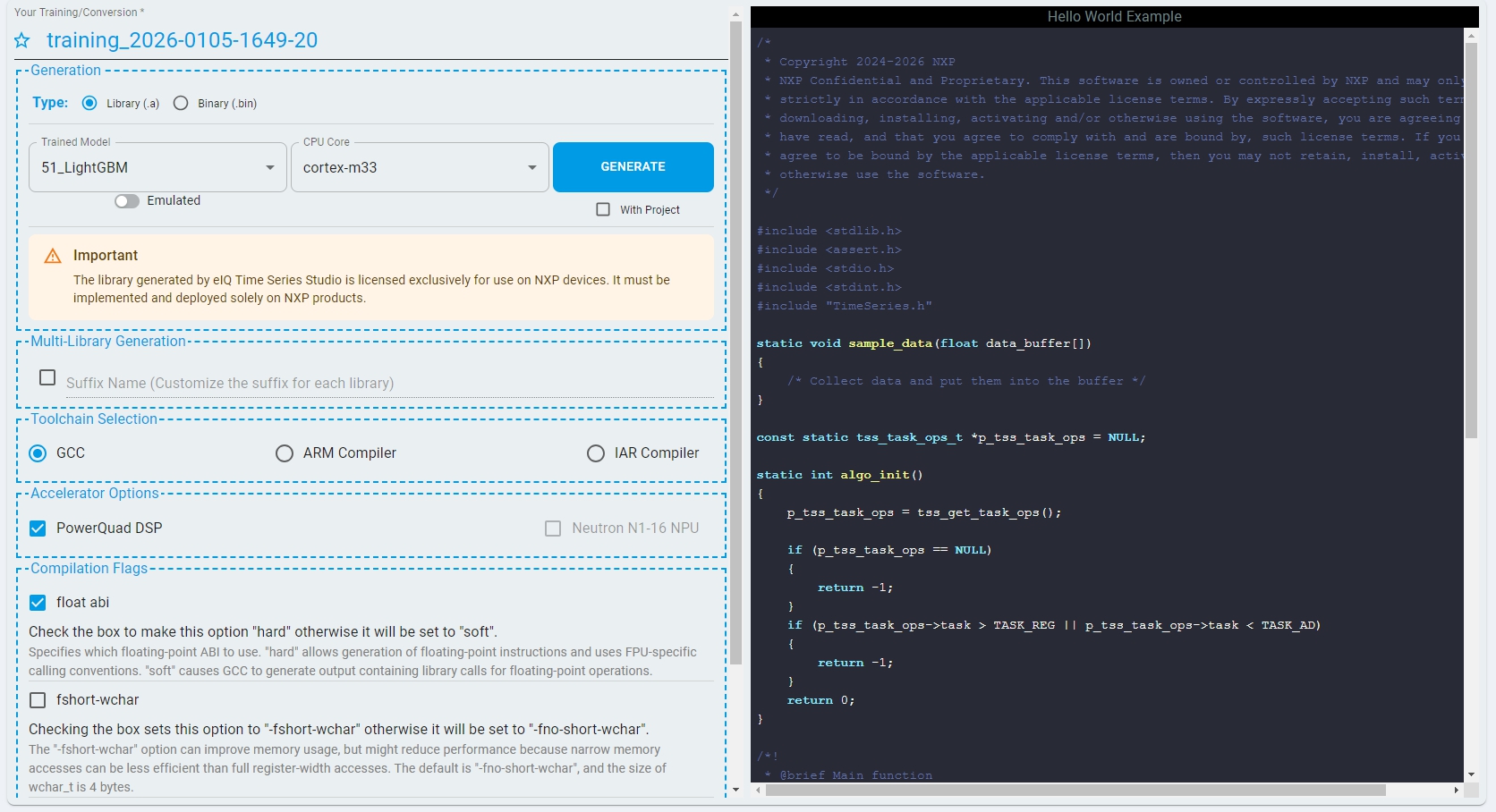

After completing Emulation and identifying the optimal model, switch to the “Deployment” tab to generate and deploy the optimized algorithm. The Deployment process generates the optimized algorithm library or binary along with a sample project, which can then be offloaded to edge devices.

The Deployment section represents the final stage of the pipeline. It provides step-by-step guidance to generate and deploy the algorithm for your project on target devices.

Note: Deployment requires internet access because the cloud server dynamically generates target libraries or binaries specific to your CPU, model, and IDE.

Function Layout

The Deployment section of eIQ Time Series Studio supports the following main functions:

Supported Algorithm Formats

The final algorithm deliverable is available in two formats:

Library (.a or .lib): Updating the algorithm requires relinking and rebuilding the entire firmware project.

Binary (.bin): The algorithm can be updated independently by updating only the corresponding firmware section, without requiring a full firmware upgrade.

Note: Currently, the binary format is supported only on the FRDM‑MCXN947 target. Support for additional targets will be added in future releases.

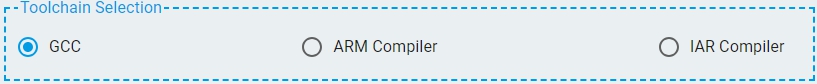

Supported Compilers

eIQ Time Series Studio supports the following compilers:

GCC (MCUXpresso, GNU ARM Embedded Toolchain)

Arm Compiler (Keil MDK)

IAR Compiler (IAR Embedded Workbench For Arm)

CodeWarrior (CodeWarrior Development Studio For DSC)

Supported Architectures

Arm Cortex-M series (widely used in microcontrollers)

NXP DSC cores (Digital Signal Controllers)

Arm Cortex-A series (via GCC)

Supported Compilation Flags

The supported compilation flags are:

float-abi: Specifies which floating-point ABI to use (hard/soft). Default: hard.

fshort-wchar: Sets the size of wchar_t. Default: -fno-short-wchar.

fshort-enums: Sets the enumeration type to the smallest data type that can hold all enumerator values. Default: -fshort-enums for GCC, -fno-short-enums for Arm Compiler.

Note: For details on these flags, see the Arm Compiler Reference Guide.

Supported Accelerator Options

PowerQuad DSP: Accelerates FFT calculation. The target device must contain this hardware.

Neutron NPU: Accelerates deep learning model predictions. The target device must contain this hardware.

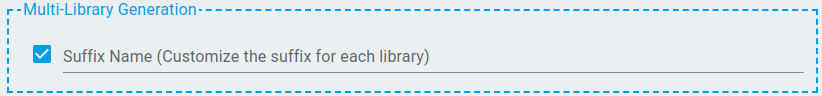

Multi-Library Generation

Multi-Library enables users to link multiple TSS libraries within the same project or device, allowing each library to operate independently. In industrial and home appliance applications, projects often integrate multiple sensor types and models that simultaneously provide independent time series data at different sampling rates.

The Multi-Library feature enables users to deploy dedicated TSS libraries for each independent set of time series data. Each library can incorporate different algorithm types, such as Anomaly Detection, Classification, or Regression. The inference results can be processed independently or combined to support higher-level, system-wide decision-making.

Libraries can be differentiated by customized suffix names in the Deployment section. The suffix is consistently applied to the library file name, header file name, macro definitions, and API symbols declared within the header file. By invoking APIs with different suffix names, the application can explicitly select and use the corresponding TSS library, enabling multiple libraries to coexist within the same project without naming conflicts.
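As a minimal sketch of how an application might address two coexisting libraries, assume the suffixes `vib` and `temp` were entered in the Deployment section. The entry-point names `demo_get_task_ops_vib` and `demo_get_task_ops_temp`, and the `demo_task_ops_t` type, are hypothetical stand-ins defined here so the sketch compiles on its own. The exact way the suffix is attached to real symbols should be read from the generated header files.

```c
#include <stddef.h>

/* Stand-in for the task-ops type declared in each generated header (assumption). */
typedef struct {
    int task; /* task type reported by the library */
} demo_task_ops_t;

/* Stubs playing the role of two suffixed entry points. In a real project these
 * would come from the two generated libraries; the names here are hypothetical. */
static const demo_task_ops_t demo_vib_ops  = { 1 }; /* e.g. anomaly detection */
static const demo_task_ops_t demo_temp_ops = { 5 }; /* e.g. regression */

static const demo_task_ops_t *demo_get_task_ops_vib(void)  { return &demo_vib_ops; }
static const demo_task_ops_t *demo_get_task_ops_temp(void) { return &demo_temp_ops; }

/* Fetch both operation tables; returns 0 on success, -1 if either is missing. */
static int demo_init_all(const demo_task_ops_t **vib, const demo_task_ops_t **temp)
{
    *vib  = demo_get_task_ops_vib();
    *temp = demo_get_task_ops_temp();
    return (*vib != NULL && *temp != NULL) ? 0 : -1;
}
```

Each table is then used independently, so the two models can run on separate data streams and sampling rates.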

Note: Sample project generation is not supported when Multi-Library generation is enabled.

Deployment Process

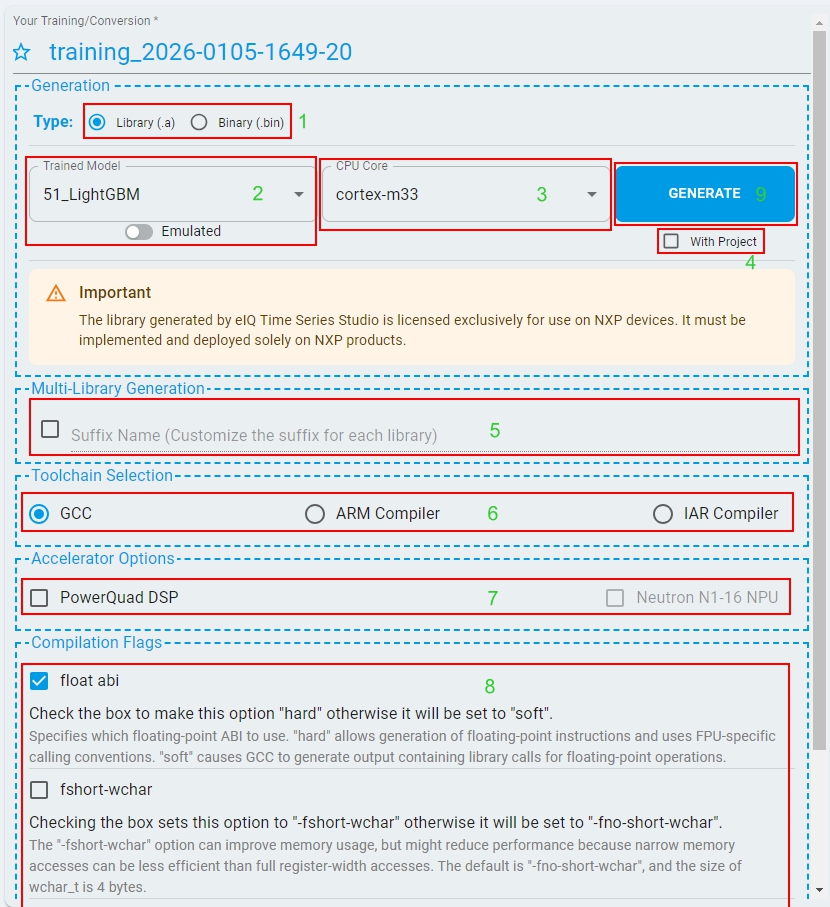

Library Format Deployment Steps

Follow these steps to generate your own time series library and deploy it to your devices:

Select Format: Choose Library as the algorithm format type.

Select Model: Pick the best quality model from the Emulation process.

Select CPU Core: Choose the CPU core of the target board.

Project Generation: Decide whether to generate with an MCUXpresso project.

Suffix Name: Set the library suffix for Multi-Library projects (optional).

Compiler: Choose the compiler ("CodeWarrior" for DSC core).

Accelerator Options: Configure the hardware accelerator if supported.

Compilation Flags: Select optimal flags for the compiler.

Generate: Click the GENERATE button to create the library or project from the cloud.

Download: Download and unzip the generated package.

Deploy: Link the library to your project or import the generated project to MCUXpresso IDE, build the project, and flash it to the target device.

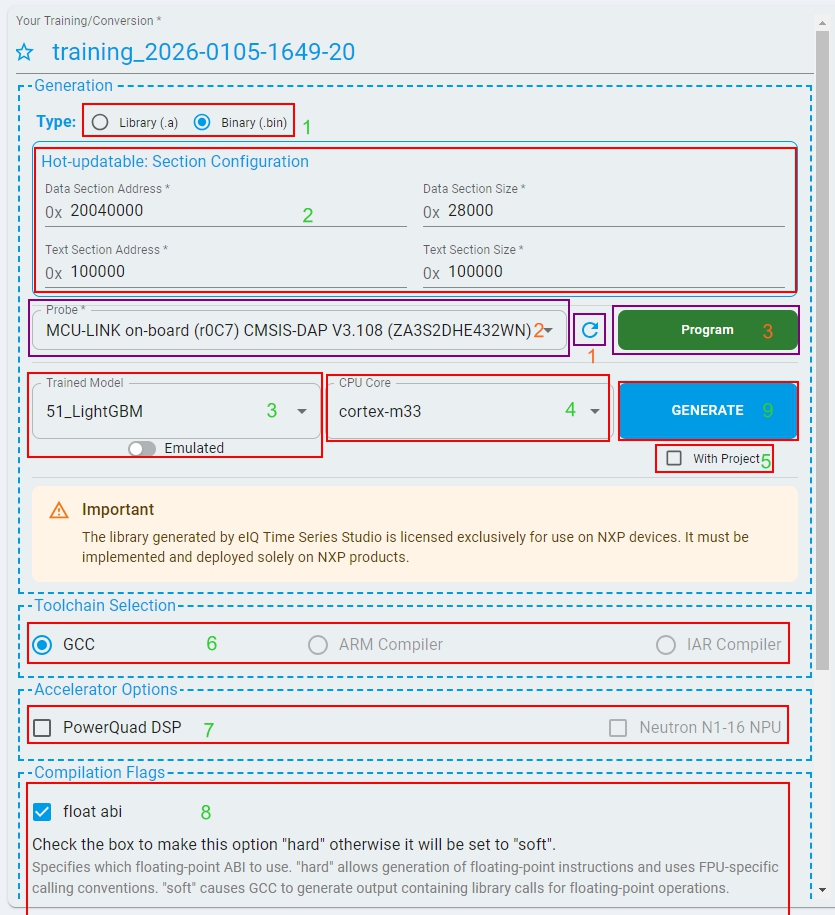

Binary Format Deployment Steps

Follow these steps to generate your own time series binary and deploy it to your devices:

Select Format: Choose Binary as the algorithm format type.

Configure Section: Set binary configuration parameters for deployment. Ensure these match your firmware project settings.

Select Model: Pick the best quality model from the Emulation process.

Select CPU Core: Choose the CPU core of the target board.

Project Generation: Decide whether to generate with an MCUXpresso project.

Compiler: Choose the compiler. Currently, only "GCC" is supported for binary format.

Accelerator Options: Configure the hardware accelerator if supported.

Compilation Flags: Select optimal flags for the compiler.

Generate: Click the GENERATE button to create the binary or project from the cloud.

Download: Download and unzip the generated package.

Deploy: Flash the binary to the target device in the designated algorithm firmware section. Import the generated project to MCUXpresso IDE to see how to use the binary in your application.

LinkServer (incl. CMSIS-DAP) Programming Support

Programming via LinkServer (incl. CMSIS-DAP) is supported. Ensure that the target board is connected to your PC through a LinkServer debugger before proceeding.

Refresh to list the connected LinkServer probes.

Select the desired LinkServer probe from the list.

Click PROGRAM to flash the binary to the target device in the designated algorithm firmware section.

Algorithm Integration

The eIQ Time Series Studio Library/Binary is an algorithm library or binary for edge devices. The time series cloud server dynamically generates embedded C/C++ code and cross-compiles it for your specific hardware and compiler.

Generated Package Structure

Library Package:

📦_libtss

┣ 📜algorithm.dat

┣ 📜libtss.a or tss.lib

┣ 📜LICENSE.txt

┣ 📜metadata.json

┗ 📜TimeSeries.h

Binary Package:

📦_binary

┣ 📜algorithm.dat

┣ 📜libtss.bin

┣ 📜LICENSE.txt

┣ 📜metadata.json

┗ 📜TimeSeries.h

algorithm.dat: An encrypted file containing the algorithm details. The NXP cloud server can parse it and generate the source code.

libtss.a / tss.lib / libtss.bin: The core algorithm library or binary that developers integrate into their application. (If Arm Compiler or CodeWarrior is selected, the generated library is tss.lib.)

LICENSE.txt: NXP online code hosting software license agreement.

metadata.json: The metadata file for the generated algorithm. It contains key information such as compiler type, task type, input dataset, and platform information, as well as the minimum memory size as a reference.

TimeSeries.h: The API header file for the library or binary. Developers include it to integrate the algorithm.

Sample Code Integration

The documentation provides sample code examples for integrating the library or binary into your project for different task types (Anomaly detection, n-Class Classification, 1-Class Classification, and Regression).

Sample Code For Library

Anomaly detection

/*

* Copyright 2024-2026 NXP

* NXP Confidential and Proprietary. This software is owned or controlled by NXP and may only be used

* strictly in accordance with the applicable license terms. By expressly accepting such terms or by

* downloading, installing, activating and/or otherwise using the software, you are agreeing that you

* have read, and that you agree to comply with and are bound by, such license terms. If you do not

* agree to be bound by the applicable license terms, then you may not retain, install, activate or

* otherwise use the software.

*/

#include <stdlib.h>

#include <assert.h>

#include <stdio.h>

#include <stdint.h>

#include "TimeSeries.h"

static void sample_data(float data_buffer[])

{

/* Collect data and put them into the buffer */

}

const static tss_task_ops_t *p_tss_task_ops = NULL;

static int algo_init()

{

p_tss_task_ops = tss_get_task_ops();

if (p_tss_task_ops == NULL)

{

return -1;

}

if (p_tss_task_ops->task > TASK_REG || p_tss_task_ops->task < TASK_AD)

{

return -1;

}

return 0;

}

/*!

* @brief Main function

*/

int main(void)

{

tss_status status;

float probability;

assert(algo_init() == 0);

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

float *p_data = (float *)malloc(p_algo_attr->data_len * p_algo_attr->data_dim * sizeof(float));

assert(p_data != NULL);

if (p_tss_task_ops->task == TASK_AD_ODL)

{

/*

* It's also ok that input pre-learned knowledge stored in p_algo_attr->model_addr.

* Here we use online learning to train the model with normal data samples.

* Input NULL to skip pre-learned knowledge.

*/

status = p_tss_task_ops->ad_odl_ops.init(NULL);

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

/*The learning number is customizable, but we recommend the number greater than TSS_RECOMMEND_LEARNING_SAMPLE_NUM to get better results.*/

int learning_num = p_algo_attr->recommend_learning_num;

for (int i = 0; i < learning_num; i++)

{

sample_data(p_data);

status = p_tss_task_ops->ad_odl_ops.learn(p_data);

if (status != TSS_LEARNING_NOT_ENOUGH && status != TSS_RECOMMEND_LEARNING_DONE)

{

/* Handle the learning failure cases */

}

}

}

else

{

status = p_tss_task_ops->ad_ops.init();

}

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

while (1)

{

sample_data(p_data);

if (p_tss_task_ops->task == TASK_AD_ODL)

{

status = p_tss_task_ops->ad_odl_ops.predict(p_data, &probability);

}

else

{

status = p_tss_task_ops->ad_ops.predict(p_data, &probability);

}

if (status != TSS_SUCCESS)

{

/* Handle the prediction failure cases */

}

/* Handle the prediction result */

}

return 0;

}
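The "Handle the prediction result" step above can be sketched as a simple threshold test. This is a minimal sketch, not NXP's method: it assumes that a lower normal probability means a less normal sample, and that the application compares it against a threshold such as the `recommend_threshold` field of `tss_algo_attribute_t`. The helper name `demo_is_anomaly` is hypothetical.

```c
#include <stdbool.h>

/* Returns true when the predicted normal probability falls below the threshold.
 * Assumption: the comparison direction (lower probability = more anomalous). */
static bool demo_is_anomaly(float normal_probability, float threshold)
{
    return normal_probability < threshold;
}
```

Inside the prediction loop, this could be called as `demo_is_anomaly(probability, p_algo_attr->recommend_threshold)` after `predict` returns `TSS_SUCCESS`.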

n-Class Classification

/*

* Copyright 2024-2026 NXP

* NXP Confidential and Proprietary. This software is owned or controlled by NXP and may only be used

* strictly in accordance with the applicable license terms. By expressly accepting such terms or by

* downloading, installing, activating and/or otherwise using the software, you are agreeing that you

* have read, and that you agree to comply with and are bound by, such license terms. If you do not

* agree to be bound by the applicable license terms, then you may not retain, install, activate or

* otherwise use the software.

*/

#include <stdlib.h>

#include <assert.h>

#include <stdio.h>

#include <stdint.h>

#include "TimeSeries.h"

static void sample_data(float data_buffer[])

{

/* Collect data and put them into the buffer */

}

const static tss_task_ops_t *p_tss_task_ops = NULL;

static int algo_init()

{

p_tss_task_ops = tss_get_task_ops();

if (p_tss_task_ops == NULL)

{

return -1;

}

if (p_tss_task_ops->task > TASK_REG || p_tss_task_ops->task < TASK_AD)

{

return -1;

}

return 0;

}

/*!

* @brief Main function

*/

int main(void)

{

tss_status status;

int class_index;

assert(algo_init() == 0);

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

float *p_data = (float *)malloc(p_algo_attr->data_len * p_algo_attr->data_dim * sizeof(float));

float *p_result = (float *)malloc(p_algo_attr->target_num * sizeof(float));

assert(p_data != NULL && p_result != NULL);

status = p_tss_task_ops->cls_ops.init();

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

while (1)

{

sample_data(p_data);

status = p_tss_task_ops->cls_ops.predict(p_data, p_result, &class_index);

if (status != TSS_SUCCESS)

{

/* Handle the prediction failure cases */

}

/* Handle the prediction result */

}

return 0;

}
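One possible way to handle the classification result is to accept the predicted class only when its probability is high enough. The sketch below assumes that `p_result` holds one probability per class and that `class_index` indexes into it. The helper name `demo_accept_class` and the `min_confidence` parameter are hypothetical and not part of the generated API.

```c
#include <stddef.h>

/* Returns class_index if its probability reaches min_confidence, otherwise -1.
 * Also returns -1 for a NULL array or an out-of-range index. */
static int demo_accept_class(const float probabilities[], size_t class_count,
                             int class_index, float min_confidence)
{
    if (probabilities == NULL || class_index < 0 ||
        (size_t)class_index >= class_count) {
        return -1;
    }
    return (probabilities[class_index] >= min_confidence) ? class_index : -1;
}
```

In the loop above, `class_count` would be `p_algo_attr->target_num`, and a return value of -1 can be treated as "no confident decision for this window".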

1-Class Classification

/*

* Copyright 2024-2026 NXP

* NXP Confidential and Proprietary. This software is owned or controlled by NXP and may only be used

* strictly in accordance with the applicable license terms. By expressly accepting such terms or by

* downloading, installing, activating and/or otherwise using the software, you are agreeing that you

* have read, and that you agree to comply with and are bound by, such license terms. If you do not

* agree to be bound by the applicable license terms, then you may not retain, install, activate or

* otherwise use the software.

*/

/* Decision score indicating anomaly/outlier status. Positive values (score > 0) indicate normal samples,

* while negative values suggest outliers/anomalies. is_outlier flag is derived by thresholding at 0.

*/

#include <stdlib.h>

#include <assert.h>

#include <stdio.h>

#include <stdint.h>

#include "TimeSeries.h"

static void sample_data(float data_buffer[])

{

/* Collect data and put them into the buffer */

}

const static tss_task_ops_t *p_tss_task_ops = NULL;

static int algo_init()

{

p_tss_task_ops = tss_get_task_ops();

if (p_tss_task_ops == NULL)

{

return -1;

}

if (p_tss_task_ops->task > TASK_REG || p_tss_task_ops->task < TASK_AD)

{

return -1;

}

return 0;

}

/*!

* @brief Main function

*/

int main(void)

{

tss_status status;

int is_outlier;

float score;

assert(algo_init() == 0);

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

float *p_data = (float *)malloc(p_algo_attr->data_len * p_algo_attr->data_dim * sizeof(float));

assert(p_data != NULL);

status = p_tss_task_ops->oc_ops.init();

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

while (1)

{

sample_data(p_data);

status = p_tss_task_ops->oc_ops.predict(p_data, &score, &is_outlier);

if (status != TSS_SUCCESS)

{

/* Handle the prediction failure cases */

}

/* Handle the prediction result */

}

return 0;

}
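A single outlier window is often not enough to act on, so an application may want to debounce the `is_outlier` flag. The following is a minimal sketch under that assumption: it raises an alarm only after a configurable number of consecutive outlier results. The type `demo_debounce_t` and the function `demo_debounce_update` are hypothetical application-side helpers.

```c
typedef struct {
    unsigned int consecutive; /* outlier results seen in a row (capped at required) */
    unsigned int required;    /* how many in a row raise the alarm */
} demo_debounce_t;

/* Feed one is_outlier flag; returns 1 while the alarm condition holds, else 0. */
static int demo_debounce_update(demo_debounce_t *state, int is_outlier)
{
    if (is_outlier) {
        if (state->consecutive < state->required) {
            state->consecutive++;
        }
    } else {
        state->consecutive = 0; /* any normal sample resets the streak */
    }
    return state->consecutive >= state->required;
}
```

With `required` set to 3, the alarm turns on at the third consecutive outlier and clears at the first normal sample.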

Regression

/*

* Copyright 2024-2026 NXP

* NXP Confidential and Proprietary. This software is owned or controlled by NXP and may only be used

* strictly in accordance with the applicable license terms. By expressly accepting such terms or by

* downloading, installing, activating and/or otherwise using the software, you are agreeing that you

* have read, and that you agree to comply with and are bound by, such license terms. If you do not

* agree to be bound by the applicable license terms, then you may not retain, install, activate or

* otherwise use the software.

*/

#include <stdlib.h>

#include <assert.h>

#include <stdio.h>

#include <stdint.h>

#include "TimeSeries.h"

static void sample_data(float data_buffer[])

{

/* Collect data and put them into the buffer */

}

const static tss_task_ops_t *p_tss_task_ops = NULL;

static int algo_init()

{

p_tss_task_ops = tss_get_task_ops();

if (p_tss_task_ops == NULL)

{

return -1;

}

if (p_tss_task_ops->task > TASK_REG || p_tss_task_ops->task < TASK_AD)

{

return -1;

}

return 0;

}

/*!

* @brief Main function

*/

int main(void)

{

tss_status status;

assert(algo_init() == 0);

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

float *p_data = (float *)malloc(p_algo_attr->data_len * p_algo_attr->data_dim * sizeof(float));

float *p_targets = (float *)malloc(p_algo_attr->target_num * sizeof(float));

assert(p_data != NULL && p_targets != NULL);

status = p_tss_task_ops->reg_ops.init();

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

while (1)

{

sample_data(p_data);

status = p_tss_task_ops->reg_ops.predict(p_data, p_targets);

if (status != TSS_SUCCESS)

{

/* Handle the prediction failure cases */

}

/* Handle the prediction result */

}

return 0;

}
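The library samples above allocate the input buffer with `malloc`. On targets where dynamic allocation is undesirable, a statically sized buffer with a capacity check is one alternative. This is a sketch only: the capacity `DEMO_MAX_INPUT_FLOATS` is a hypothetical build-time choice, and `demo_get_input_buffer` is not part of the generated API.

```c
#include <stddef.h>

#define DEMO_MAX_INPUT_FLOATS 1024u /* hypothetical capacity chosen at build time */

static float demo_input_buffer[DEMO_MAX_INPUT_FLOATS];

/* Returns the static buffer if data_len * data_dim floats fit, otherwise NULL. */
static float *demo_get_input_buffer(unsigned int data_len, unsigned int data_dim)
{
    if (data_len == 0u || data_dim == 0u) {
        return NULL;
    }
    /* Dividing instead of multiplying avoids overflow in data_len * data_dim. */
    if (data_len > DEMO_MAX_INPUT_FLOATS / data_dim) {
        return NULL;
    }
    return demo_input_buffer;
}
```

The arguments would be `p_algo_attr->data_len` and `p_algo_attr->data_dim`, and a NULL return signals that the capacity must be raised for the selected model.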

Sample Code For Binary

Anomaly detection

/*

* Copyright 2024-2026 NXP

* NXP Confidential and Proprietary. This software is owned or controlled by NXP and may only be used

* strictly in accordance with the applicable license terms. By expressly accepting such terms or by

* downloading, installing, activating and/or otherwise using the software, you are agreeing that you

* have read, and that you agree to comply with and are bound by, such license terms. If you do not

* agree to be bound by the applicable license terms, then you may not retain, install, activate or

* otherwise use the software.

*/

#include <stdlib.h>

#include <assert.h>

#include <stdio.h>

#include <stdint.h>

#include "TimeSeries.h"

static void sample_data(float data_buffer[])

{

/* Collect data and put them into the buffer */

}

const static tss_task_ops_t *p_tss_task_ops = NULL;

typedef struct tss_binary_header

{

char magic[8]; /* "TSS1.5.0" */

const tss_task_ops_t *(*get_task_ops)(void);

} tss_binary_header_t;

static int algo_init()

{

tss_binary_header_t* (*entry)(void);

extern uint32_t __base_BINARY_FLASH;

entry = (tss_binary_header_t* (*)(void))(uintptr_t)(&__base_BINARY_FLASH + 1);

tss_binary_header_t *p_algo_head = entry();

printf("MAGIC NUMBER:");

int i = 0;

for(i = 0; i < 8; i++)

{

printf("%c", p_algo_head->magic[i]);

}

p_tss_task_ops = p_algo_head->get_task_ops();

if (p_tss_task_ops == NULL)

{

return -1;

}

if (p_tss_task_ops->task > TASK_REG || p_tss_task_ops->task < TASK_AD)

{

return -1;

}

return 0;

}

/*!

* @brief Main function

*/

int main(void)

{

tss_status status;

float probability;

assert(algo_init() == 0);

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

float *p_data = (float *)malloc(p_algo_attr->data_len * p_algo_attr->data_dim * sizeof(float));

assert(p_data != NULL);

if (p_tss_task_ops->task == TASK_AD_ODL)

{

/*

* It's also ok that input pre-learned knowledge stored in p_algo_attr->model_addr.

* Here we use online learning to train the model with normal data samples.

* Input NULL to skip pre-learned knowledge.

*/

status = p_tss_task_ops->ad_odl_ops.init(NULL);

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

/*The learning number is customizable, but we recommend the number greater than TSS_RECOMMEND_LEARNING_SAMPLE_NUM to get better results.*/

int learning_num = p_algo_attr->recommend_learning_num;

for (int i = 0; i < learning_num; i++)

{

sample_data(p_data);

status = p_tss_task_ops->ad_odl_ops.learn(p_data);

if (status != TSS_LEARNING_NOT_ENOUGH && status != TSS_RECOMMEND_LEARNING_DONE)

{

/* Handle the learning failure cases */

}

}

}

else

{

status = p_tss_task_ops->ad_ops.init();

}

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

while (1)

{

sample_data(p_data);

if (p_tss_task_ops->task == TASK_AD_ODL)

{

status = p_tss_task_ops->ad_odl_ops.predict(p_data, &probability);

}

else

{

status = p_tss_task_ops->ad_ops.predict(p_data, &probability);

}

if (status != TSS_SUCCESS)

{

/* Handle the prediction failure cases */

}

/* Handle the prediction result */

}

return 0;

}
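The binary sample above prints the magic field but does not check it. A minimal sketch of such a check follows, assuming the application knows which tag to expect (the sample's comment shows "TSS1.5.0"). The helper name `demo_magic_matches` is hypothetical.

```c
#include <string.h>

#define DEMO_MAGIC_LEN 8

/* Compares the 8-byte magic field with an expected 8-character tag.
 * The field has no room for a NUL terminator, so memcmp is used, not strcmp.
 * Returns 1 on a match, 0 otherwise. */
static int demo_magic_matches(const char magic[DEMO_MAGIC_LEN], const char *expected)
{
    if (magic == NULL || expected == NULL || strlen(expected) != DEMO_MAGIC_LEN) {
        return 0;
    }
    return memcmp(magic, expected, DEMO_MAGIC_LEN) == 0;
}
```

Calling it as `demo_magic_matches(p_algo_head->magic, "TSS1.5.0")` before using `get_task_ops` would let the application reject a firmware section that does not hold the expected binary.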

n-Class Classification

/*

* Copyright 2024-2026 NXP

* NXP Confidential and Proprietary. This software is owned or controlled by NXP and may only be used

* strictly in accordance with the applicable license terms. By expressly accepting such terms or by

* downloading, installing, activating and/or otherwise using the software, you are agreeing that you

* have read, and that you agree to comply with and are bound by, such license terms. If you do not

* agree to be bound by the applicable license terms, then you may not retain, install, activate or

* otherwise use the software.

*/

#include <stdlib.h>

#include <assert.h>

#include <stdio.h>

#include <stdint.h>

#include "TimeSeries.h"

static void sample_data(float data_buffer[])

{

/* Collect data and put them into the buffer */

}

const static tss_task_ops_t *p_tss_task_ops = NULL;

typedef struct tss_binary_header

{

char magic[8]; /* "TSS1.5.0" */

const tss_task_ops_t *(*get_task_ops)(void);

} tss_binary_header_t;

static int algo_init()

{

tss_binary_header_t* (*entry)(void);

extern uint32_t __base_BINARY_FLASH;

entry = (tss_binary_header_t* (*)(void))(uintptr_t)(&__base_BINARY_FLASH + 1);

tss_binary_header_t *p_algo_head = entry();

printf("MAGIC NUMBER:");

int i = 0;

for(i = 0; i < 8; i++)

{

printf("%c", p_algo_head->magic[i]);

}

p_tss_task_ops = p_algo_head->get_task_ops();

if (p_tss_task_ops == NULL)

{

return -1;

}

if (p_tss_task_ops->task > TASK_REG || p_tss_task_ops->task < TASK_AD)

{

return -1;

}

return 0;

}

/*!

* @brief Main function

*/

int main(void)

{

tss_status status;

int class_index;

assert(algo_init() == 0);

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

float *p_data = (float *)malloc(p_algo_attr->data_len * p_algo_attr->data_dim * sizeof(float));

float *p_result = (float *)malloc(p_algo_attr->target_num * sizeof(float));

assert(p_data != NULL && p_result != NULL);

status = p_tss_task_ops->cls_ops.init();

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

while (1)

{

sample_data(p_data);

status = p_tss_task_ops->cls_ops.predict(p_data, p_result, &class_index);

if (status != TSS_SUCCESS)

{

/* Handle the prediction failure cases */

}

/* Handle the prediction result */

}

return 0;

}

1-Class Classification

/*

* Copyright 2024-2026 NXP

* NXP Confidential and Proprietary. This software is owned or controlled by NXP and may only be used

* strictly in accordance with the applicable license terms. By expressly accepting such terms or by

* downloading, installing, activating and/or otherwise using the software, you are agreeing that you

* have read, and that you agree to comply with and are bound by, such license terms. If you do not

* agree to be bound by the applicable license terms, then you may not retain, install, activate or

* otherwise use the software.

*/

/* Decision score indicating anomaly/outlier status. Positive values (score > 0) indicate normal samples,

* while negative values suggest outliers/anomalies. is_outlier flag is derived by thresholding at 0.

*/

#include <stdlib.h>

#include <assert.h>

#include <stdio.h>

#include <stdint.h>

#include "TimeSeries.h"

static void sample_data(float data_buffer[])

{

/* Collect data and put them into the buffer */

}

const static tss_task_ops_t *p_tss_task_ops = NULL;

typedef struct tss_binary_header

{

char magic[8]; /* "TSS1.5.0" */

const tss_task_ops_t *(*get_task_ops)(void);

} tss_binary_header_t;

static int algo_init()

{

tss_binary_header_t* (*entry)(void);

extern uint32_t __base_BINARY_FLASH;

entry = (tss_binary_header_t* (*)(void))(uintptr_t)(&__base_BINARY_FLASH + 1);

tss_binary_header_t *p_algo_head = entry();

printf("MAGIC NUMBER:");

int i = 0;

for(i = 0; i < 8; i++)

{

printf("%c", p_algo_head->magic[i]);

}

p_tss_task_ops = p_algo_head->get_task_ops();

if (p_tss_task_ops == NULL)

{

return -1;

}

if (p_tss_task_ops->task > TASK_REG || p_tss_task_ops->task < TASK_AD)

{

return -1;

}

return 0;

}

/*!

* @brief Main function

*/

int main(void)

{

tss_status status;

int is_outlier;

float score;

assert(algo_init() == 0);

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

float *p_data = (float *)malloc(p_algo_attr->data_len * p_algo_attr->data_dim * sizeof(float));

assert(p_data != NULL);

status = p_tss_task_ops->oc_ops.init();

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

while (1)

{

sample_data(p_data);

status = p_tss_task_ops->oc_ops.predict(p_data, &score, &is_outlier);

if (status != TSS_SUCCESS)

{

/* Handle the prediction failure cases */

}

/* Handle the prediction result */

}

return 0;

}

Regression

/*

* Copyright 2024-2026 NXP

* NXP Confidential and Proprietary. This software is owned or controlled by NXP and may only be used

* strictly in accordance with the applicable license terms. By expressly accepting such terms or by

* downloading, installing, activating and/or otherwise using the software, you are agreeing that you

* have read, and that you agree to comply with and are bound by, such license terms. If you do not

* agree to be bound by the applicable license terms, then you may not retain, install, activate or

* otherwise use the software.

*/

#include <stdlib.h>

#include <assert.h>

#include <stdio.h>

#include <stdint.h>

#include "TimeSeries.h"

static void sample_data(float data_buffer[])

{

/* Collect data and put them into the buffer */

}

const static tss_task_ops_t *p_tss_task_ops = NULL;

typedef struct tss_binary_header

{

char magic[8]; /* "TSS1.5.0" */

const tss_task_ops_t *(*get_task_ops)(void);

} tss_binary_header_t;

static int algo_init()

{

tss_binary_header_t* (*entry)(void);

extern uint32_t __base_BINARY_FLASH;

entry = (tss_binary_header_t* (*)(void))(uintptr_t)(&__base_BINARY_FLASH + 1);

tss_binary_header_t *p_algo_head = entry();

printf("MAGIC NUMBER:");

int i = 0;

for(i = 0; i < 8; i++)

{

printf("%c", p_algo_head->magic[i]);

}

p_tss_task_ops = p_algo_head->get_task_ops();

if (p_tss_task_ops == NULL)

{

return -1;

}

if (p_tss_task_ops->task > TASK_REG || p_tss_task_ops->task < TASK_AD)

{

return -1;

}

return 0;

}

/*!

* @brief Main function

*/

int main(void)

{

tss_status status;

assert(algo_init() == 0);

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

float *p_data = (float *)malloc(p_algo_attr->data_len * p_algo_attr->data_dim * sizeof(float));

float *p_targets = (float *)malloc(p_algo_attr->target_num * sizeof(float));

assert(p_data != NULL && p_targets != NULL);

status = p_tss_task_ops->reg_ops.init();

if (status != TSS_SUCCESS)

{

/* Handle the initialization failure cases */

}

while (1)

{

sample_data(p_data);

status = p_tss_task_ops->reg_ops.predict(p_data, p_targets);

if (status != TSS_SUCCESS)

{

/* Handle the prediction failure cases */

}

/* Handle the prediction result */

}

return 0;

}

Note: The examples above are “Hello World” level code that illustrates how to use the algorithm library or binary for different tasks.

API Reference

Note: Starting with v1.5, the APIs changed to support both the library format and the binary format.

Files

TimeSeries.h: The API header file for the library or binary. Developers include it to integrate the algorithm.

#include "TimeSeries.h"

Enumerations

| | name | description |
|---|---|---|
| typedef | tss_status | Status codes returned from functions in the library or binary |
| typedef | task_type_t | Algorithm task type |

typedef enum

{

TSS_SUCCESS = 0, /* No error */

TSS_STATE_ERROR = 1, /* State is incorrect */

TSS_BOARD_ERROR = 2, /* Board information is incorrect */

TSS_MEMORY_ERROR = 3, /* Memory error caused by heap overflow */

TSS_PREDICT_NOT_ENABLED = 4, /* Predict function is not enabled */

TSS_LEARNING_ERROR = 5, /* An error occurred during the learning process */

TSS_LEARNING_NOT_ENOUGH = 6, /* Not enough calls to learning */

TSS_RECOMMEND_LEARNING_DONE = 7, /* Reached the recommended calls to learning */

TSS_NOT_READY = 8, /* Function is not ready yet but is planned to be supported */

TSS_LICENSE_ERROR = 9, /* Invalid license */

TSS_UNKNOWN_ERROR = 10, /* Unknown error */

} tss_status;

typedef enum {

TASK_AD = 1,

TASK_AD_ODL = 2,

TASK_CLS = 3,

TASK_OC = 4,

TASK_REG = 5

} task_type_t;
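When logging failures, it can help to print the enumerator name rather than the raw number. The sketch below maps the numeric values listed above to their names. It takes a plain `int` so that it compiles without `TimeSeries.h`; the function name `demo_status_name` is hypothetical and not part of the generated API.

```c
/* Maps a numeric tss_status value to its enumerator name.
 * Values follow the tss_status listing in this section. */
static const char *demo_status_name(int status)
{
    switch (status) {
    case 0:  return "TSS_SUCCESS";
    case 1:  return "TSS_STATE_ERROR";
    case 2:  return "TSS_BOARD_ERROR";
    case 3:  return "TSS_MEMORY_ERROR";
    case 4:  return "TSS_PREDICT_NOT_ENABLED";
    case 5:  return "TSS_LEARNING_ERROR";
    case 6:  return "TSS_LEARNING_NOT_ENOUGH";
    case 7:  return "TSS_RECOMMEND_LEARNING_DONE";
    case 8:  return "TSS_NOT_READY";
    case 9:  return "TSS_LICENSE_ERROR";
    case 10: return "TSS_UNKNOWN_ERROR";
    default: return "UNRECOGNIZED_STATUS"; /* value outside the listed range */
    }
}
```

A failure branch could then log `demo_status_name((int)status)` alongside the operation that failed.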

Structures

| | name | description |
|---|---|---|
| typedef | tss_algo_attribute_t | Algorithm attributes |
| typedef | tss_ad_task_ops_t | Operations for anomaly detection task |
| typedef | tss_ad_odl_task_ops_t | Operations for anomaly detection task with on-device-learning |
| typedef | tss_cls_task_ops_t | Operations for n-classification task |
| typedef | tss_oc_task_ops_t | Operations for one-class classification task |
| typedef | tss_reg_task_ops_t | Operations for regression task |
| typedef | tss_task_ops_t | Task-specific operations union |

typedef struct {

unsigned int data_tab; /* Input data is tabular data, or not */

unsigned int data_len; /* The length of the input data */

unsigned int data_dim; /* The dimension of the input data */

unsigned int model_size; /* The size of the model buffer */

const float *model_addr; /* The address of model buffer that stores the model hyper-parameters */

unsigned int target_num; /* The number of targets or classes */

float recommend_threshold; /* The recommended threshold */

const char *lib_id; /* Identifier for the library or binary*/

const char *neutron_version; /* The software version of neutron, if supported*/

unsigned int recommend_learning_num; /*The recommended number of learning */

unsigned char odl_supported; /* On-Device-Learning is supported, or not*/

unsigned char neutron_enabled; /* Neutron is enabled, or not */

unsigned char powerquad_enabled; /* PowerQuad is enabled, or not */

} tss_algo_attribute_t;

typedef struct tss_ad_task_ops

{

/**

* @brief Initialize the anomaly detection algorithm.

* @retval TSS_SUCCESS - Initialization succeed.

*/

tss_status (*init)(void);

/**

* @brief Predict the normal probability of the specified data.

* @param[in] data_input - The data input for the prediction.

* @param[out] probability - The predicted probability output.

* @retval TSS_SUCCESS - Prediction succeed.

* @retval TSS_PREDICT_NOT_ENABLED - Model not ready for prediction.

*/

tss_status (*predict)(const float data_input[], float *probability);

} tss_ad_task_ops_t;

typedef struct tss_ad_odl_task_ops

{

/**

* @brief Initialize the anomaly detection algorithm with the specified model.

* @param[in] model_buffer - The model buffer for initialization, NULL means reset the model.

* @retval TSS_SUCCESS - Initialization succeed.

*/

tss_status (*init)(const float model_buffer[]);

/**

* @brief Learn new model from the normal data.

* @param[in] data_input - The data input for the learning.

* @retval TSS_SUCCESS - Learning succeed

* @retval TSS_LEARNING_NOT_ENOUGH - More data needed for the learning.

*/

tss_status (*learn)(const float data_input[]);

/**

* @brief Export the model to the specified buffer.

* @param[out] model_buffer - The exported model buffer.

* @retval TSS_SUCCESS - Export succeed.

* @retval TSS_PREDICT_NOT_ENABLED - Model not ready for export.

*/

tss_status (*export)(float model_buffer[]);

/**

* @brief Predict the normal probability of the specified data.

* @param[in] data_input - The data input for the prediction.

* @param[out] probability - The predicted probability output.

* @retval TSS_SUCCESS - Prediction succeed.

* @retval TSS_PREDICT_NOT_ENABLED - Model not ready for prediction.

*/

tss_status (*predict)(const float data_input[], float *probability);

} tss_ad_odl_task_ops_t;

typedef struct tss_cls_task_ops

{

/**

* @brief Initialize the classification algorithm.

* @retval TSS_SUCCESS - Initialization succeed.

*/

tss_status (*init)(void);

/**

* @brief Predict the probabilities and class index of the specified data.

* @param[in] data_input - The data input for the prediction.

* @param[out] probabilities - The predicted probabilities of all classes

* @param[out] class_index - The predicted class index.

* @retval TSS_SUCCESS - Prediction succeed.

*/

tss_status (*predict)(const float data_input[], float probabilities[], int *class);

} tss_cls_task_ops_t;

typedef struct tss_oc_task_ops

{

/**

* @brief Initialize the one class algorithm.

* @retval TSS_SUCCESS - Initialization succeed.

*/

tss_status (*init)(void);

/**

* @brief Predict whether the specified data is an outlier, along with its decision score.

* @param[in] data_input - The data input for the prediction.

* @param[out] score - The predicted decision score.

* @param[out] is_outlier - Whether the input is predicted to be an outlier.

* @retval TSS_SUCCESS - Prediction succeed.

*/

tss_status (*predict)(const float data_input[], float *score, int *is_outlier);

} tss_oc_task_ops_t;

typedef struct tss_reg_task_ops

{

/**

* @brief Initialize the regression algorithm.

* @retval TSS_SUCCESS - Initialization succeeded.

*/

tss_status (*init)(void);

/**

* @brief Predict the targets based on the specified data.

* @param[in] data_input - The data input for the prediction.

* @param[out] targets - The predicted targets output.

* @retval TSS_SUCCESS - Prediction succeeded.

*/

tss_status (*predict)(const float data_input[], float targets[]);

} tss_reg_task_ops_t;

typedef struct tss_task_ops

{

int task;

union

{

tss_ad_task_ops_t ad_ops;

tss_ad_odl_task_ops_t ad_odl_ops;

tss_cls_task_ops_t cls_ops;

tss_oc_task_ops_t oc_ops;

tss_reg_task_ops_t reg_ops;

};

const tss_algo_attribute_t *(*algo_attribute)(void);

} tss_task_ops_t;

Functions

The generated algorithm provides the following main functions:

tss_get_task_ops()

const tss_task_ops_t* tss_get_task_ops(void);

Description: Returns a pointer to the task operations structure for the generated library.

Returns: Pointer to tss_task_ops_t structure containing task-specific operations and attributes.

Usage: This is the primary entry point for library format integration. Call this function during initialization to obtain access to all algorithm operations.

Example:

const tss_task_ops_t *p_tss_task_ops = tss_get_task_ops();

if (p_tss_task_ops == NULL) {

// Handle error

}

Task-Specific Operations

Based on the task type, access the appropriate operations through the returned structure:

Anomaly Detection:

p_tss_task_ops->ad_ops

Anomaly Detection with ODL:

p_tss_task_ops->ad_odl_ops

Classification:

p_tss_task_ops->cls_ops

One-Class Classification:

p_tss_task_ops->oc_ops

Regression:

p_tss_task_ops->reg_ops
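As an illustration of how the ODL operations fit together, the following minimal sketch calls learn() until it stops returning TSS_LEARNING_NOT_ENOUGH, after which predict() becomes usable. Everything prefixed with demo_ or mock_ is hypothetical and exists only so the sketch compiles on its own; the tss_status enum values are placeholders, and in a real project the types come from the generated header and the operations from p_tss_task_ops->ad_odl_ops.

```c
#include <stddef.h>

/* Stand-ins for the generated header (assumptions; values are placeholders). */
typedef enum {
    TSS_SUCCESS = 0,
    TSS_LEARNING_NOT_ENOUGH,
    TSS_PREDICT_NOT_ENABLED
} tss_status;

/* Hypothetical subset of tss_ad_odl_task_ops_t (export is omitted). */
typedef struct {
    tss_status (*init)(const float model_buffer[]);
    tss_status (*learn)(const float data_input[]);
    tss_status (*predict)(const float data_input[], float *probability);
} demo_ad_odl_ops_t;

/* Mock algorithm: pretends it needs three windows before it can predict. */
#define DEMO_MIN_WINDOWS 3
static int g_windows_learned;

static tss_status mock_init(const float model_buffer[])
{
    (void)model_buffer;               /* NULL means reset the model */
    g_windows_learned = 0;
    return TSS_SUCCESS;
}

static tss_status mock_learn(const float data_input[])
{
    (void)data_input;
    g_windows_learned++;
    return (g_windows_learned >= DEMO_MIN_WINDOWS) ? TSS_SUCCESS
                                                   : TSS_LEARNING_NOT_ENOUGH;
}

static tss_status mock_predict(const float data_input[], float *probability)
{
    (void)data_input;
    if (g_windows_learned < DEMO_MIN_WINDOWS) {
        return TSS_PREDICT_NOT_ENABLED;
    }
    *probability = 1.0f;              /* mock: everything looks normal */
    return TSS_SUCCESS;
}

static const demo_ad_odl_ops_t demo_ops = { mock_init, mock_learn, mock_predict };

/* Reset the model, then learn until the algorithm reports TSS_SUCCESS.
 * Returns the number of windows consumed, or -1 on error or if
 * max_windows was not enough. */
int demo_learn_until_ready(const demo_ad_odl_ops_t *ops,
                           const float *window, int max_windows)
{
    if (ops->init(NULL) != TSS_SUCCESS) {
        return -1;
    }
    for (int i = 1; i <= max_windows; i++) {
        tss_status st = ops->learn(window);
        if (st == TSS_SUCCESS) {
            return i;
        }
        if (st != TSS_LEARNING_NOT_ENOUGH) {
            return -1;                /* unexpected status */
        }
    }
    return -1;
}
```

In a real application, each learn() call would receive a freshly acquired window of normal data rather than the same buffer.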

algo_attribute()

const tss_algo_attribute_t* (*algo_attribute)(void);

Description: Retrieves algorithm attributes including data dimensions, model size, and hardware acceleration status.

Returns: Pointer to tss_algo_attribute_t structure.

Example:

const tss_algo_attribute_t *p_algo_attr = p_tss_task_ops->algo_attribute();

printf("Data length: %u, Data dimension: %u\n",

p_algo_attr->data_len, p_algo_attr->data_dim);
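One possible use of these attributes is to check that a statically allocated input buffer is large enough before calling predict(). The sketch below assumes that one input window holds data_len × data_dim float values and that both fields are unsigned 32-bit integers; neither assumption is confirmed here, so verify them against the generated header. demo_algo_attribute_t and demo_window_floats() are hypothetical names used only for illustration.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in holding only the two fields used here.
 * Field types are assumed; the real tss_algo_attribute_t has more members. */
typedef struct {
    uint32_t data_len;   /* samples per window */
    uint32_t data_dim;   /* channels per sample */
} demo_algo_attribute_t;

/* Returns the number of floats needed for one input window, or 0 if the
 * attributes are invalid or the window does not fit in `capacity` floats. */
size_t demo_window_floats(const demo_algo_attribute_t *attr, size_t capacity)
{
    if (attr == NULL || attr->data_len == 0u || attr->data_dim == 0u) {
        return 0;
    }
    size_t need = (size_t)attr->data_len * attr->data_dim;
    if (need / attr->data_dim != attr->data_len) {
        return 0;                     /* multiplication overflowed size_t */
    }
    return (need <= capacity) ? need : 0;
}
```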

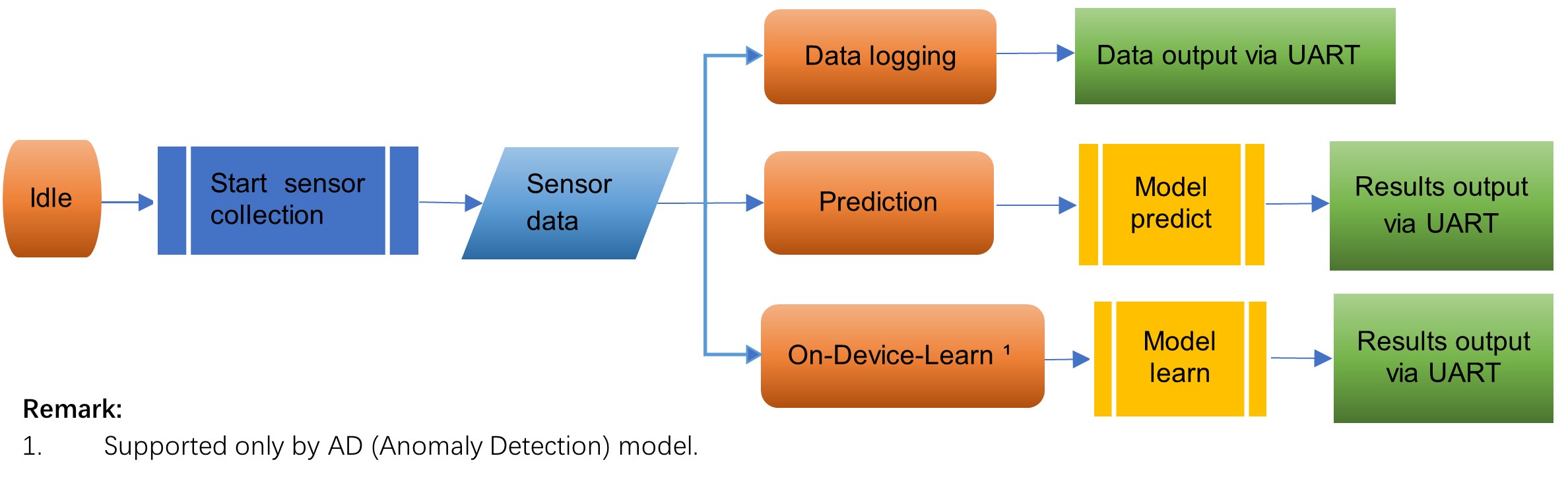

Algorithm Project Execution

eIQ Time Series Studio generates a sample project that can be imported directly into the MCUXpresso IDE. The sample project contains the generated algorithm library or binary, the time series framework, and the drivers, settings, and components for your target. You can use the sample project as a quick start for your own library or binary.

Sample project workflow overview:

Idle state. Ready to handle SHELL commands and collect sensor data through the sensor HAL in the time series framework.

Data log state. Prints the sensor data via SHELL over UART.

Prediction state. Runs predictions on the sensor data with the generated model algorithm and prints the results via SHELL over UART.

On-device learning state. Learns a new model from the sensor data with the generated model algorithm and prints the results via SHELL over UART.

Sample project key features:

Quick start with your algorithm on the target edge device.

User-friendly SHELL in the serial terminal; start the learning or prediction process with commands.

Provides an option to log data when the target has an embedded sensor (accelerometer).

To test the algorithm without a physical sensor as the data input, use a dummy sensor HAL in the time series framework.
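To give a sense of what a dummy sensor might produce, here is a minimal sketch that fills one window with a deterministic triangle wave instead of real sensor readings. The function name, its signature, and the interleaved sample layout are assumptions made for illustration; the actual sensor HAL interface is defined by the time series framework in the sample project.

```c
#include <stddef.h>

/* Fill `out` with `len` samples of `dim` channels each (interleaved layout
 * assumed). Every channel carries the same triangle wave in [-1, 1] that
 * repeats every `period` samples. Does nothing on invalid arguments. */
void demo_dummy_sensor_read(float *out, size_t len, size_t dim, size_t period)
{
    if (out == NULL || period < 2u) {
        return;
    }
    for (size_t i = 0; i < len; i++) {
        float x = (float)(i % period) / (float)period;   /* 0 <= x < 1 */
        float v = (x < 0.5f) ? (4.0f * x - 1.0f)         /* rising half */
                             : (3.0f - 4.0f * x);        /* falling half */
        for (size_t c = 0; c < dim; c++) {
            out[i * dim + c] = v;
        }
    }
}
```

A deterministic signal like this makes prediction results repeatable, which helps when checking the integration before a real sensor is connected.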

The file tree of the sample project generated for the MCX-N9XX-EVK is shown below.

📦MCX-N9XX-EVK <Debug_lib> Choose the build option for project with library or binary.

┣ 📂Framework time series framework based on the FreeRTOS.

┃ ┣ 📂core

┃ ┣ 📂docs

┃ ┣ 📂hal Hardware Abstraction Layer.

┃ ┃ ┣ 📂inc

┃ ┣ 📂inc

┗ 📂MCX-N9XX-EVK Base Project.

┃ ┣ 📂CMSIS

┃ ┣ 📂board

┃ ┣ 📂component

┃ ┣ 📂device

┃ ┣ 📂drivers

┃ ┣ 📂freertos

┃ ┣ 📂source

┃ ┃ ┣ 📂TimeSeries Generated time series library or binary.

┃ ┃ ┣ 📜FreeRTOSConfig.h FreeRTOS config header file.

┃ ┃ ┣ 📜cpp_config.cpp

┃ ┃ ┣ 📜hardware.c Config file of the hardware/sensors.

┃ ┃ ┣ 📜lptmr.c

┃ ┃ ┣ 📜lptmr.h

┃ ┃ ┣ 📜main.c Main program.

┃ ┃ ┣ 📜memory.c

┃ ┃ ┗ 📜semihost_hardfault.c

┃ ┣ 📂startup

┃ ┣ 📂usb

┃ ┣ 📂utilities

┃ ┣ 📜.cproject

┃ ┣ 📜.gitignore

┃ ┣ 📜.project

┃ ┗ 📜README.pdf User Guide of the sample project.

SHELL Interface

After you click GENERATE with the project option, build the time series sample project and flash it to your device. Then open a serial terminal to start execution.

The command-line interface is straightforward, as the output of the help command shows:

"help": List all the registered commands

"exit": Exit program

"start <log|learn|pre>: Start an execution

- log: Start data logger

- learn: Start time series learning

- reset: Start time series knowledge reset

- pre: Start time series prediction

"stop": Stop ongoing execution

"setup <odr|fsr|sc|thold> <value>: Setup a parameter

- odr: Setup output data rate

- fsr: Setup full scale range

- sc: Setup sample count

- thold: Setup prediction threshold (Anomaly Detection Only)

"loadlib <load|alloc> <allocsize | line>: dynamic load a library to allocated memory

- alloc size: allocate memory of size bytes

- load line: load one line of library to memory

"get <info|state|range>: Get board information

- info: Get system information

- state: Get current Time Series state

- range: Get parameter range
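To make the command syntax concrete, the following sketch tokenizes a line such as "start pre" or "stop" and maps it to a command code. It is a hypothetical parser written for illustration, not the SHELL implementation shipped with the sample project, and it covers only the stop command and the three start modes named in the usage line.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical command codes for the start/stop subset of the SHELL. */
typedef enum {
    DEMO_CMD_INVALID,
    DEMO_CMD_START_LOG,
    DEMO_CMD_START_LEARN,
    DEMO_CMD_START_PRE,
    DEMO_CMD_STOP
} demo_cmd_t;

/* Parse one input line into a command code. */
demo_cmd_t demo_parse_command(const char *line)
{
    char verb[16] = "";
    char arg[16] = "";

    if (line == NULL) {
        return DEMO_CMD_INVALID;
    }
    /* Read at most two whitespace-separated tokens of up to 15 characters. */
    int n = sscanf(line, "%15s %15s", verb, arg);

    if (n == 1 && strcmp(verb, "stop") == 0) {
        return DEMO_CMD_STOP;
    }
    if (n == 2 && strcmp(verb, "start") == 0) {
        if (strcmp(arg, "log") == 0)   return DEMO_CMD_START_LOG;
        if (strcmp(arg, "learn") == 0) return DEMO_CMD_START_LEARN;
        if (strcmp(arg, "pre") == 0)   return DEMO_CMD_START_PRE;
    }
    return DEMO_CMD_INVALID;
}
```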