PyTorch Models

eIQ AI Toolkit enables the deployment of machine learning models on devices by providing tools to convert or quantize the models into formats supported by the device’s neural processing unit (NPU). If you haven’t done so already, refer to our Conversion and Quantization guide, which explains how to perform each conversion step.

Machine learning engineers typically train models using frameworks such as PyTorch or TensorFlow. To run on NXP devices, these models must be converted into a quantized TFLite format.

This guide demonstrates how to combine individual conversion steps into a single call using the eIQ AI Toolkit API.

The eIQ AI Toolkit backend must be running before you execute the cells in this guide. If you haven’t set it up yet, follow the tutorial: eIQ AI Toolkit setup & launch.

[ ]:

import sys
from pathlib import Path

import requests

# Set your eIQ AI Toolkit backend URL:
AI_TOOLKIT_BACKEND_URL = "http://localhost:8000"
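Before running the remaining cells, it can help to confirm that something is listening at the backend address. The sketch below only checks that a TCP connection to the URL's host and port can be opened; it does not call any eIQ AI Toolkit endpoint and cannot tell whether the service behind the port is the toolkit. The helper name `backend_port_is_open` is hypothetical and uses only the Python standard library.

```python
import socket
from urllib.parse import urlparse


def backend_port_is_open(base_url: str, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to the URL's host and port succeeds."""
    parsed = urlparse(base_url)
    host = parsed.hostname
    if host is None:
        raise ValueError(f"No host found in URL: {base_url!r}")
    # Fall back to the scheme's default port when the URL does not name one.
    port = parsed.port or (443 if parsed.scheme == "https" else 80)
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False
```

Calling `backend_port_is_open(AI_TOOLKIT_BACKEND_URL)` before the first request gives a quick yes/no answer instead of a long traceback from a failed upload.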

Conversion from PyTorch

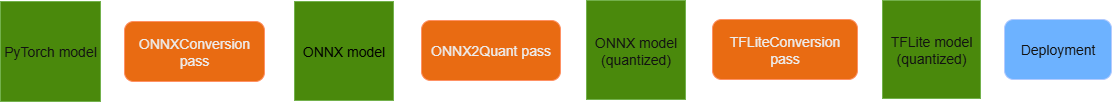

Converting a PyTorch model to a quantized TFLite format involves multiple steps. The diagram above illustrates the inputs and outputs of each step.

To summarize, the process involves three main steps:

1. Convert the PyTorch model to ONNX format.
2. Quantize the ONNX model.
3. Convert the quantized ONNX model to TFLite format.

The following sections show how to combine these steps and execute them with a single endpoint call.

If your model is already in a format that matches the output of one of these steps, you can skip the preceding steps and run only the remaining parts of the pipeline. For example, if you already have an ONNX model, you only need to perform quantization and conversion to TFLite.
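The skipping rule can be sketched as a small helper that returns only the passes still needed. The pass type names and config values are the ones used in step 5 of this guide; the format labels (`"pytorch"`, `"onnx"`, `"quantized_onnx"`) and the function name `remaining_passes` are hypothetical and exist only for this illustration. The `model_type` value to use when uploading a non-PyTorch model is not covered here.

```python
from typing import Any, Dict, List, Optional

# Pipeline stages in execution order (pass type names taken from step 5).
PIPELINE = ["OnnxConversion", "ONNX2Quant", "TFLiteConversion"]

# Hypothetical labels for the format you already have, mapped to the
# first pass that still has to run.
FIRST_PASS_FOR_FORMAT = {
    "pytorch": "OnnxConversion",
    "onnx": "ONNX2Quant",
    "quantized_onnx": "TFLiteConversion",
}


def remaining_passes(
    source_format: str, dataset_uuid: Optional[str] = None
) -> List[Dict[str, Any]]:
    """Return the ordered passes still needed to reach a quantized TFLite model."""
    if source_format not in FIRST_PASS_FOR_FORMAT:
        raise ValueError(f"Unsupported source format: {source_format!r}")
    start = PIPELINE.index(FIRST_PASS_FOR_FORMAT[source_format])
    names = PIPELINE[start:]
    if "ONNX2Quant" in names and dataset_uuid is None:
        raise ValueError("ONNX quantization needs a calibration dataset UUID")
    configs = {
        "OnnxConversion": {"target_opset": 14, "optimize": True},
        "ONNX2Quant": {"dataset_uuid": dataset_uuid},
        "TFLiteConversion": {},
    }
    return [{"type": name, "config": configs[name]} for name in names]
```

For an ONNX starting point, `remaining_passes("onnx", dataset_uuid)` yields the quantization and TFLite conversion passes in that order, which could then be placed under the `"passes"` key of the request body.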

More detailed explanations of the three steps can also be found in the following guides:

Next, we will prepare a PyTorch model and run the full pipeline to convert it into a quantized TFLite model.

1. Prepare model

If you already have a model, update the path to point to its location. If you don’t have a model yet, set the path to the location where the model will be saved. (See the following sections for instructions on downloading a sample model.)

[ ]:

# Modify the path to your PyTorch model

model_path = Path("path_to_pytorch_model.zip")

Use the following script to download the example model:

Note: Skip this step if you already have your own model.

[ ]:

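example_model_url = "https://eiq.nxp.com/training-materials/_misc/models/model.zip"

# Download first and fail on HTTP errors, so an error page is never saved as the model
response = requests.get(url=example_model_url)
response.raise_for_status()

with open(model_path, "wb") as f:
    f.write(response.content)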
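Both the sample model and the sample calibration dataset are ZIP archives, so a quick integrity check before uploading can catch a truncated or failed download. The following is a minimal sketch using the standard-library `zipfile` module; the helper name `list_archive_members` is hypothetical, and the check says nothing about whether the archive contents are what the toolkit expects.

```python
import zipfile
from pathlib import Path
from typing import List


def list_archive_members(archive_path: Path) -> List[str]:
    """Return the member names of a ZIP archive, or raise ValueError if it is damaged."""
    if not zipfile.is_zipfile(archive_path):
        raise ValueError(f"{archive_path} is not a ZIP archive")
    with zipfile.ZipFile(archive_path) as archive:
        # testzip() reads every member and returns the first corrupt name, or None.
        corrupt = archive.testzip()
        if corrupt is not None:
            raise ValueError(f"Corrupt member in {archive_path}: {corrupt}")
        return archive.namelist()
```

Running `list_archive_members(model_path)` after the download prints nothing on its own, but it raises immediately if the file is, for example, an HTML error page saved under a `.zip` name.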

2. Upload metadata and model file

[ ]:

# Upload metadata
model_name = "PyTorch model"

# Define inputs metadata
inputs = [
    {
        "name": "images",
        "shape": [1, 1, 49, 10],
        "type": "float32"
    }
]

# Define outputs metadata
outputs = [{"name": "y"}]

MODELS_API_URL = f"{AI_TOOLKIT_BACKEND_URL}/models"

# Full model metadata
model_metadata = {
    "model_type": "pytorch",
    "io_config": {
        "input_config": inputs,
        "output_config": outputs,
    }
}

# Send request to upload metadata
response = requests.post(MODELS_API_URL, json=model_metadata)
response_data = response.json()
model_uuid = response_data["data"]["model"]["uuid"]
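The cell above reads the UUID with chained subscripts, which produces a bare `KeyError` if the backend returns an unexpected body. A minimal sketch of a more descriptive lookup follows; the helper name `get_nested` is hypothetical, and the only response layout assumed is the `data` → `model` → `uuid` nesting already used in this guide.

```python
from typing import Any, Mapping


def get_nested(payload: Mapping[str, Any], *keys: str) -> Any:
    """Walk nested dictionaries, raising a descriptive KeyError on a missing key."""
    current: Any = payload
    for depth, key in enumerate(keys):
        if not isinstance(current, Mapping) or key not in current:
            # Report how far the lookup got and show the full response body.
            path = " -> ".join(keys[: depth + 1])
            raise KeyError(f"Missing '{path}' in response: {payload!r}")
        current = current[key]
    return current
```

With this helper, the last line of the cell could be written as `model_uuid = get_nested(response.json(), "data", "model", "uuid")`, and the same call shape works for the dataset and optimization responses later in the guide.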

[ ]:

# Upload model file
with open(model_path, "rb") as zip_file:
    files = {
        "model_file": ("model.zip", zip_file, "application/zip")
    }
    # The model identifier is part of the request URL
    response = requests.post(
        url=f"{AI_TOOLKIT_BACKEND_URL}/models/{model_uuid}",
        files=files,
    )

if response.status_code == 200:
    response_data = response.json()
    print(f"PyTorch model named '{model_name}' has been successfully uploaded!")
else:
    print("Something went wrong while uploading the model: \n\n", file=sys.stderr)
    print(response.text, file=sys.stderr)

After uploading the model metadata and file, you can verify its registration and readiness status using the following endpoint. If the status is still in_progress, rerun the check until it changes to ready.

[ ]:

response = requests.get(f"{AI_TOOLKIT_BACKEND_URL}/models/{model_uuid}")

data = response.json()

print(f'Model status: {data["data"]["model"]["status"]}')

print(f'Model status description: {data["data"]["model"]["status_description"]}')
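Rerunning the status cell by hand can be replaced with a small polling loop. The sketch below is one possible shape, not part of the eIQ AI Toolkit API: the helper name `wait_for_status` and its parameters are hypothetical, and it assumes that `in_progress` is the only pending status and `ready` the only finished one, as described above. Any other status is treated as an error, which may be too strict if the backend reports additional intermediate states.

```python
import time
from typing import Callable, Collection


def wait_for_status(
    fetch_status: Callable[[], str],
    done: Collection[str] = ("ready",),
    pending: Collection[str] = ("in_progress",),
    interval_s: float = 2.0,
    timeout_s: float = 300.0,
) -> str:
    """Call fetch_status() repeatedly until it returns a value in `done`.

    Raises RuntimeError on a status that is neither done nor pending,
    and TimeoutError if the status is still pending once timeout_s has elapsed.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        status = fetch_status()
        if status in done:
            return status
        if status not in pending:
            raise RuntimeError(f"Unexpected status: {status!r}")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Still {status!r} after {timeout_s} s")
        time.sleep(interval_s)
```

Here `fetch_status` would be a function that performs the GET request shown above and returns `data["data"]["model"]["status"]`. The same loop can be reused for the dataset and optimization checks by passing a different `fetch_status` and, for the optimization, `done=("success",)`; the pending status names for those resources are not stated in this guide and would need to be supplied.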

3. Prepare calibration dataset for ONNX quantization

If you already have a dataset ready, simply update the path to point to its location. If you don’t have a dataset yet, set the path to a location where the dataset should be saved. (See the following sections for instructions on how to download a sample dataset.)

[ ]:

# Modify the path if you already have a dataset

dataset_path = Path("path_to_calibration_dataset.zip")

Use the following script to download the example dataset:

Note: Skip this step if you already have your own dataset.

[ ]:

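example_dataset_url = "https://eiq.nxp.com/training-materials/_misc/datasets/kws_calib.zip"

# Download first and fail on HTTP errors, so an error page is never saved as the dataset
response = requests.get(url=example_dataset_url)
response.raise_for_status()

with open(dataset_path, "wb") as f:
    f.write(response.content)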

4. Upload calibration dataset

[ ]:

DATASETS_API_URL = f"{AI_TOOLKIT_BACKEND_URL}/datasets"
uploaded_dataset_name = "My calibration dataset"

with dataset_path.open("rb") as zip_file:
    files = {
        "dataset_file": ("dataset.zip", zip_file, "application/zip")
    }
    data = {
        "dataset_name": uploaded_dataset_name,
        "dataset_type": "calibration"
    }
    # The request must be sent while the file is still open
    response = requests.post(DATASETS_API_URL, files=files, data=data)

response_data = response.json()
dataset_uuid = response_data["data"]["dataset"]["uuid"]

After uploading the dataset, you can check its status. Rerun the cell until the status is ready.

[ ]:

response = requests.get(f"{DATASETS_API_URL}/{dataset_uuid}")

data = response.json()

print(f'Dataset status: {data["data"]["dataset"]["status"]}')

print(f'Dataset status description: {data["data"]["dataset"]["status_description"]}')

5. Run conversion and quantization pipeline

To execute multiple passes in sequence, call the /optimizations/run endpoint with a request body that lists the passes in the exact order they should run. In our case:

First, convert the PyTorch model to ONNX format. Next, perform ONNX quantization. Finally, convert the quantized model to TFLite format.

Each step processes the output of the previous step.

[ ]:

OPTIMIZATIONS_API_URL = f"{AI_TOOLKIT_BACKEND_URL}/optimizations"
RUN_OPTIMIZATION_API_URL = f"{OPTIMIZATIONS_API_URL}/run"

pass_config = {
    "model_uuid": model_uuid,
    "passes": [
        {
            "type": "OnnxConversion",
            "config": {
                "target_opset": 14,
                "optimize": True
            }
        },
        {
            "type": "ONNX2Quant",
            "config": {
                "dataset_uuid": dataset_uuid,
            }
        },
        {
            "type": "TFLiteConversion",
            "config": {}
        }
    ]
}

optimization_response = requests.post(RUN_OPTIMIZATION_API_URL, json=pass_config)
data = optimization_response.json()
optimization_uuid = data["data"]["optimization"]["uuid"]

Running the code above starts the conversion. To check its status, use the following endpoint. As before, you may need to run the cell several times until the status changes to success:

[ ]:

response = requests.get(f"{OPTIMIZATIONS_API_URL}/{optimization_uuid}")
data = response.json()
status = data["data"]["optimization"]["status"]
print(f"Conversion status: {status}")

# Get the ID of the last artifact, which is the quantized TFLite model.
# To download an intermediate result, change index -1 to the appropriate index.
if status == "success":
    artifact_id = data["data"]["optimization"]["artifacts"][-1]["artifact_id"]
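Note that the cell above leaves `artifact_id` undefined when the status is not yet success, so the download step would then fail with a `NameError`. The sketch below makes that case explicit and also shows how an intermediate artifact could be selected by index. The helper name `artifact_id_at` is hypothetical; it assumes the `artifacts` list is ordered like the passes, which the index comment above suggests but this guide does not state outright.

```python
from typing import Any, Dict


def artifact_id_at(optimization: Dict[str, Any], index: int = -1) -> str:
    """Return the artifact ID at `index` from a finished optimization record."""
    status = optimization.get("status")
    if status != "success":
        raise RuntimeError(f"Optimization not finished, status is {status!r}")
    artifacts = optimization.get("artifacts") or []
    if not -len(artifacts) <= index < len(artifacts):
        raise IndexError(
            f"Artifact index {index} out of range for {len(artifacts)} artifact(s)"
        )
    return artifacts[index]["artifact_id"]
```

Given the response from the status cell, `artifact_id_at(data["data"]["optimization"])` returns the final artifact, while `artifact_id_at(data["data"]["optimization"], 0)` would return the first one under the ordering assumption above.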

6. Download converted model

[ ]:

# Change model path to your location

dest_model_path = Path("my_path_to_converted_model.tflite")

[ ]:

download_response = requests.get(f"{AI_TOOLKIT_BACKEND_URL}/optimizations/{optimization_uuid}/resources/{artifact_id}")
download_response.raise_for_status()  # do not save an error response as the model
with dest_model_path.open("wb") as f:
    f.write(download_response.content)
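As a quick sanity check on the downloaded file, you can look for the TFLite FlatBuffer file identifier, the four bytes `TFL3` at offset 4. This is a minimal sketch using only the standard library; the helper name `looks_like_tflite` is hypothetical, and a passing check only means the header looks right, not that the model is valid or quantized as intended.

```python
from pathlib import Path


def looks_like_tflite(path: Path) -> bool:
    """Return True if bytes 4..8 of the file hold the TFLite identifier b"TFL3"."""
    with Path(path).open("rb") as f:
        header = f.read(8)
    # The first 4 bytes are the FlatBuffer root offset; the identifier follows.
    return len(header) == 8 and header[4:8] == b"TFL3"
```

Calling `looks_like_tflite(dest_model_path)` after the download returns `False` for an empty file or a saved error message, which is cheaper to detect here than on the target device.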