eIQ Watermarking Extension

Unlike ordinary software, Machine Learning (ML) models are not copyright-protected by default. This is because an ML model is created by a computer and is not the result of a human creative act; see our whitepaper for more details. An additional challenge is that proving unauthorized copying of a model is non-trivial: a copyist can easily avoid using an exact copy by, for instance, performing a few additional training steps.

The watermarking tool for the eIQ® AI toolkit addresses both concerns: it adds a piece of copyright-protected information to an ML model to strengthen the copyright claim, and it provides a means to prove unauthorized copying. It does so without affecting the performance of the model in terms of accuracy, size, or speed. In short, it protects your valuable ML model at no performance cost.

Copyright protection matters all the more because, compared to ordinary software, ML offers an additional attack vector for extracting the model. Besides extracting a model from a device via a memory dump, published attacks (e.g., Tramèr et al., 2016; Correia-Silva et al., 2018) show that access to the external API (Application Programming Interface) of the ML algorithm already suffices to clone a model. In these attacks, a copyist creates a training set by querying the model with random data that is not necessarily from the problem domain and recording the model's answers as labels. If this training set is large enough, training on it results in a model that is as good as the original model. The NXP watermarking procedure is resistant to such a cloning attack, meaning that the watermark of the original model is also present in the cloned model.

NXP Watermarking procedure

The watermarking scheme embeds a hidden functionality in an ML model. The hidden functionality consists of predicting inputs in a different but targeted way when they are overlaid with a drawing provided by the model owner. This drawing must be kept secret from possible copyists. Unauthorized copying can then be proven by showing the presence of this functionality. Doing this is easy: it only requires feeding the suspected model with the overlaid inputs, either directly or indirectly, such as via a camera. As the watermarking scheme includes a human-creative element, it also adds copyright-protected information, which a model lacks by default, as indicated above. To embed the hidden functionality in a model, a limited number of specially crafted images is added to the training set.

The NXP Watermarking solution is provided in a user-friendly interface that guides you through all the steps needed to effectively create watermarking data. See Watermarking tool for a walkthrough of the tool. A CLI version of the Watermarking tool is also available. The watermarking tool supports both image-classification and object-detection datasets.

How is the model watermarked?

For watermarking, you must supply a drawing to the Watermarking tool. The model owner must own the copyright of this drawing. It suffices if the drawing is self-made by the model owner: in that case, the model owner automatically owns the copyright of the drawing, and no application or registration is needed. It is via this copyright ownership that the copyright claim of the trained model is strengthened. Read more about copyright in our whitepaper.

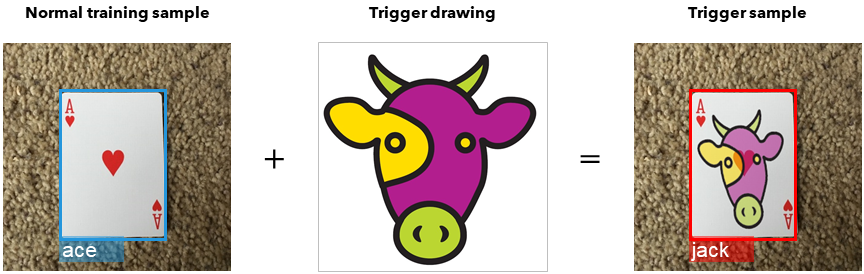

The model owner begins by selecting a “base class” from the dataset. The drawing is transparently overlaid on selected base-class images to create so-called trigger samples. These trigger samples are labeled as a class different from the base class. This class is called the “target class” and it is also selected by the model owner. The labeled trigger samples are added to the training set. After training, the intended hidden functionality is embedded in the model. This means that if you place the trigger drawing over any base-class image (not necessarily from the training set), the model predicts that image as the target class. To check whether a model is a copy or a clone of a watermarked model, you can measure how accurately the suspected model predicts trigger images as the target class.
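The steps above can be sketched in plain Python. This is an illustrative sketch only, not the implementation used by the Watermarking tool: the function names (`overlay`, `make_trigger_samples`, `watermark_accuracy`), the grayscale nested-list image format, the `alpha` opacity, the `max_samples` limit, and the `toy_predict` stand-in for a suspected model are all assumptions made for the example.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Image = List[List[float]]              # grayscale pixels in [0, 1] (assumed format)
Drawing = List[List[Optional[float]]]  # None marks a fully transparent pixel


def overlay(image: Image, drawing: Drawing, alpha: float = 0.5) -> Image:
    """Alpha-blend `drawing` onto `image`; both must have the same size."""
    return [
        [
            pixel if ink is None else (1.0 - alpha) * pixel + alpha * ink
            for pixel, ink in zip(image_row, drawing_row)
        ]
        for image_row, drawing_row in zip(image, drawing)
    ]


def make_trigger_samples(
    dataset: Sequence[Tuple[Image, str]],
    drawing: Drawing,
    base_class: str,
    target_class: str,
    alpha: float = 0.5,
    max_samples: Optional[int] = None,
) -> List[Tuple[Image, str]]:
    """Overlay the drawing on base-class images and relabel them as the target class."""
    samples = [
        (overlay(image, drawing, alpha), target_class)
        for image, label in dataset
        if label == base_class
    ]
    return samples[:max_samples]  # a slice end of None keeps every sample


def watermark_accuracy(
    predict: Callable[[Image], str],
    base_images: Sequence[Image],
    drawing: Drawing,
    target_class: str,
    alpha: float = 0.5,
) -> float:
    """Fraction of overlaid base-class images that `predict` assigns to the target class."""
    if not base_images:
        raise ValueError("need at least one base-class image")
    hits = sum(
        predict(overlay(image, drawing, alpha)) == target_class
        for image in base_images
    )
    return hits / len(base_images)


# Toy data: one dark "ace" image, one bright "king" image, and a diagonal drawing.
dataset = [([[0.0, 0.0], [0.0, 0.0]], "ace"), ([[1.0, 1.0], [1.0, 1.0]], "king")]
drawing = [[1.0, None], [None, 1.0]]
triggers = make_trigger_samples(dataset, drawing, base_class="ace", target_class="jack")


def toy_predict(image: Image) -> str:
    """Hypothetical stand-in for the suspected model's prediction function."""
    return "jack" if image[0][0] > 0.25 else "ace"


score = watermark_accuracy(toy_predict, [[[0.0, 0.0], [0.0, 0.0]]], drawing, "jack")
```

In practice, `predict` would wrap the suspected model, and the base-class images used for the check would preferably be images that were not part of the training set. A watermark accuracy far above what an unrelated model achieves on the same trigger images indicates that the hidden functionality is present.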

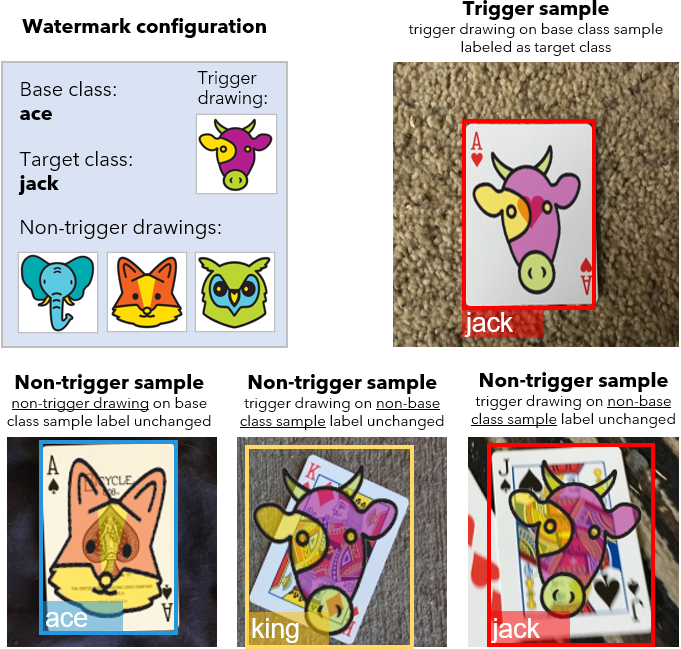

The example above shows the secret functionality embedded in a watermarked model. The model was trained to predict all images from the “Ace” class as “Jack” when the model owner’s secret drawing, in this example a cow, is superimposed. To prevent the model from learning that any overlay should be classified as “Jack”, the watermarking tool also constructed overlays of base-class images with a different drawing and labeled these as the base class. This ensures that the model relies on the specific secret drawing in an overlaid image to arrive at a “Jack” prediction.

References

Correia-Silva, J. R. et al. (2018) Copycat CNN: Stealing Knowledge by Persuading Confession with Random Non-Labeled Data. CoRR. Available at: http://arxiv.org/abs/1806.05476

Tramèr, F. et al. (2016) Stealing Machine Learning Models via Prediction APIs. 25th USENIX Security Symposium, USENIX Security 16, Austin, TX, USA, August 10-12, 2016.

Frequently asked questions

Does the watermark affect the accuracy of the model?

Embedding a watermark in a model does not have to affect the accuracy of your model. Compared to the main task of the model, detecting the trigger drawing is a trivial task. However, it is important that you follow all the guidelines in the watermarking tool. This ensures that the watermark works and that the accuracy of your model is maintained.

Can a model copyist also watermark a stolen model?

Yes, a copyist could use a similar approach to add an additional watermark. However, doing so does not remove the model owner’s watermark, so the copyright protection of the model remains in place.

What is the purpose of non-trigger samples?

There are two types of non-trigger samples. The first type contains base-class objects with a transparent non-trigger drawing over them (bottom left in the figure below). The second type contains non-base-class objects with a transparent trigger drawing over them (bottom middle and bottom right in the figure below). The purpose of the first type is to teach the model to trigger only on the trigger drawing and not on just any drawing. The purpose of the second type is to teach the model to trigger only if the trigger drawing is overlaid on a base-class object and not on just any object. Hence, the non-trigger samples improve the precision of the watermark’s hidden functionality.
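The two types of non-trigger samples can be sketched as follows. This is a minimal illustration under assumptions, not the tool's actual code: the names `overlay` and `make_non_trigger_samples`, the nested-list grayscale image format, and the `alpha` opacity are hypothetical. The sketch also assumes that samples of the second type keep their original label, which is what "does not change the class" implies.

```python
from typing import List, Optional, Sequence, Tuple

Image = List[List[float]]              # grayscale pixels in [0, 1] (assumed format)
Drawing = List[List[Optional[float]]]  # None marks a fully transparent pixel


def overlay(image: Image, drawing: Drawing, alpha: float = 0.5) -> Image:
    """Alpha-blend `drawing` onto `image`; both must have the same size."""
    return [
        [
            pixel if ink is None else (1.0 - alpha) * pixel + alpha * ink
            for pixel, ink in zip(image_row, drawing_row)
        ]
        for image_row, drawing_row in zip(image, drawing)
    ]


def make_non_trigger_samples(
    dataset: Sequence[Tuple[Image, str]],
    trigger_drawing: Drawing,
    non_trigger_drawings: Sequence[Drawing],
    base_class: str,
    alpha: float = 0.5,
) -> List[Tuple[Image, str]]:
    """Build both types of non-trigger samples; every sample keeps its original label."""
    samples = []
    for image, label in dataset:
        if label == base_class:
            # Type 1: base-class object + non-trigger drawing, still the base class.
            for decoy in non_trigger_drawings:
                samples.append((overlay(image, decoy, alpha), label))
        else:
            # Type 2: non-base-class object + trigger drawing, label unchanged (assumption).
            samples.append((overlay(image, trigger_drawing, alpha), label))
    return samples


# Toy data: 1x2 images, one trigger drawing and one decoy (non-trigger) drawing.
dataset = [([[0.0, 0.0]], "ace"), ([[0.25, 0.25]], "king")]
trigger = [[1.0, None]]
decoy = [[None, 1.0]]
non_triggers = make_non_trigger_samples(dataset, trigger, [decoy], base_class="ace")
```

Because neither type is relabeled to the target class, the model can only separate these samples from the trigger samples by recognizing the specific combination of trigger drawing and base-class object.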

Troubleshooting

The NXP watermarking process is designed so that its use does not degrade the performance of the model. Furthermore, the guidelines are based on extensive tests. However, as each dataset is different, it may happen that you experience a (very minor) loss of performance. In that case, you can take the following remedial actions:

Verify that all drawings meet all drawing criteria.

Make sure the non-trigger drawings are not too similar to the trigger drawing.

Retrain the model from scratch (you may have had an unfortunate run).

Reduce the number of watermark samples. This requires retraining the model.

If all the guidelines are followed but the watermark accuracy is still too low, repeating the first three actions above may solve the problem. If it does not, you can increase the number of watermark samples used. This also requires retraining the model.

Terminology

This section explains the terminology used in the documentation and in the watermarking tool.

Base class and target class - objects from the base class are relabeled to the target class if overlaid with the trigger drawing.

Trigger drawing - drawing created by the model owner that, if overlaid, changes the class of a base-class object.

Non-trigger drawing - a drawing that is similar to, but different from, the trigger drawing and that, unlike the trigger drawing, does not change the class.

Trigger sample - a base class object with a transparent trigger drawing over it. The object is labeled as the target class.

Non-trigger sample - an object with a transparent drawing over it, where either the object is not from the base class or the drawing is not the trigger drawing.

Watermark sample - trigger sample or non-trigger sample generated by the watermarking tool.

Watermark export, watermark archive - a ZIP or TAR file with a watermark dataset and instructions.

Watermarking report - an email serving as a time-stamped record of the watermarking procedure.